Should we automate our creativity?

Suddenly, it feels like we’re living in a sci-fi film from decades past. Content professionals are experiencing firsthand the curiosity, concern, and cautious optimism that come as artificial intelligence steps from fiction into reality.

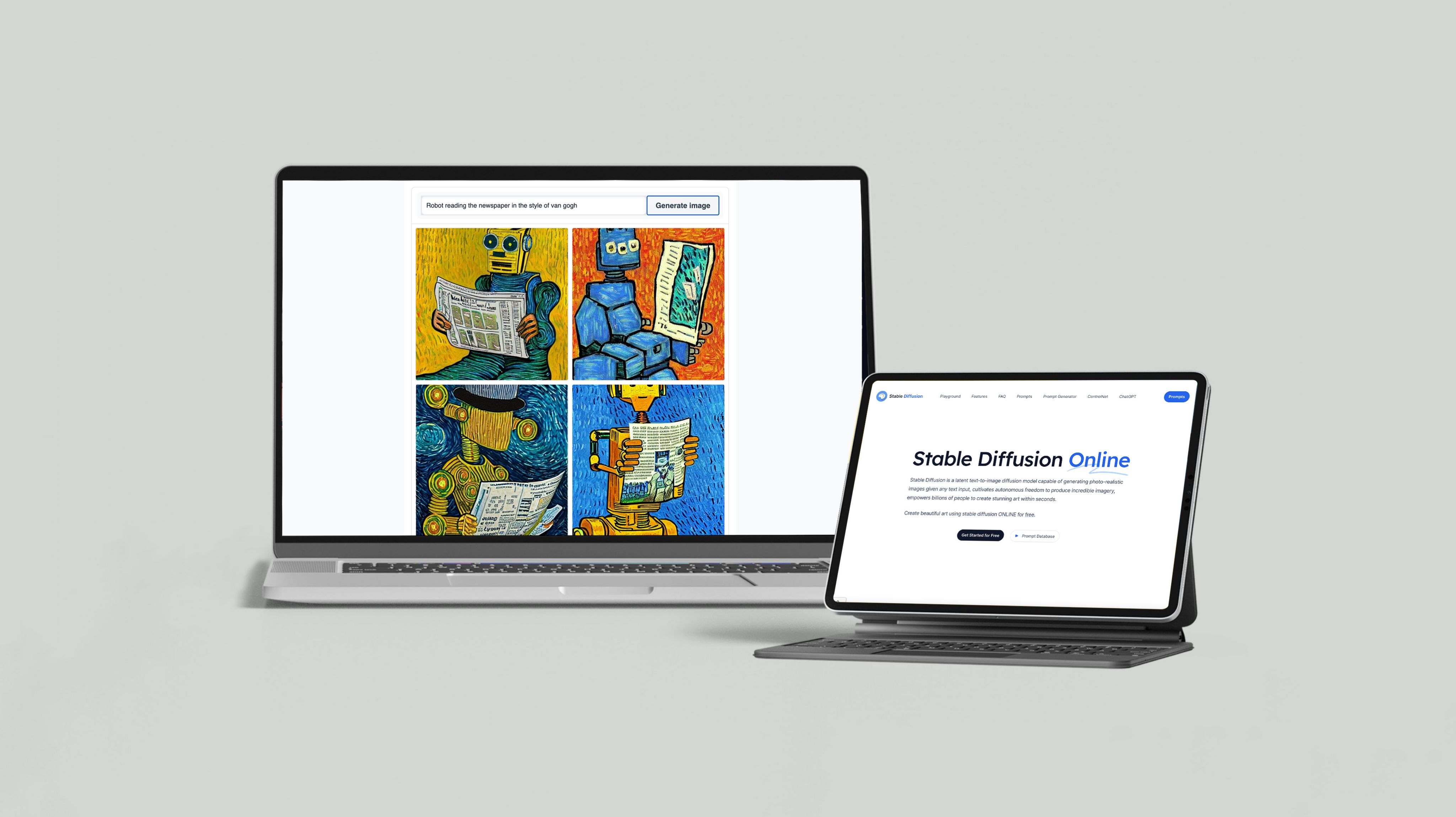

Specifically, I’m speaking of the seemingly sudden boom in access to generative AI tools such as OpenAI’s ChatGPT, MidJourney, and Stable Diffusion.

The divide among content creators is sharp. Enthusiasts post about the possibilities of AI, while the notable masthead CNET has drawn negative press for publishing AI-generated content without clear attribution. Meanwhile, BuzzFeed has publicly announced that it intends to lead the future of AI-powered content. In the announcement, Founder and CEO Jonah Peretti wrote:

“If the past 15 years of the internet have been defined by algorithmic feeds that curate and recommend content, the next 15 years will be defined by AI and data helping create, personalise, and animate the content itself. Our industry will expand beyond AI-powered curation (feeds), to AI-powered creation (content).” (source)

The handbook of acceptable use of AI in content creation doesn’t yet exist — and I can’t help but feel like we’re penning its pages in real time.

As a content professional who sits in the camp of ‘cautiously optimistic’, I decided to lean into this curiosity and ask the experts about the state of play in generative AI.

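Feeling curious, too? Join me as I explore the trajectory of generative AI in marketing, what these tools can (and can’t) create, the legal and ethical questions they raise, and how content teams are using them in their workflows.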

The trajectory of generative AI in marketing

Although long-standing players in the field have been tracking the growth of AI tools like ChatGPT since 2017, there’s no denying the recent seismic shift in society’s attitudes towards, and access to, generative AI tools.

From the influx of brightly coloured Lensa profile pictures in social feeds and the wild and wonderful visual stories generated with the likes of MidJourney (looking at you, John Oliver), to the myriad professional use cases across content, coding, and more, AI feels like it’s here to stay.

It’s more than just a feeling; the data agrees. The global generative AI industry is expected to reach a market value of US$63.05 billion by 2028, according to a new report by Sky Quest. Microsoft invested US$10 billion in OpenAI in January 2023, adding to the US$1 billion it invested in 2019 and a further investment in 2021.

But despite this extraordinary support from industry, questions remain about how broadly AI-generated content will be used in practice. When it comes to the AI tech boom, it’s hard to know what’s next without understanding what’s possible now.

What actually is generative AI? And what can it create?

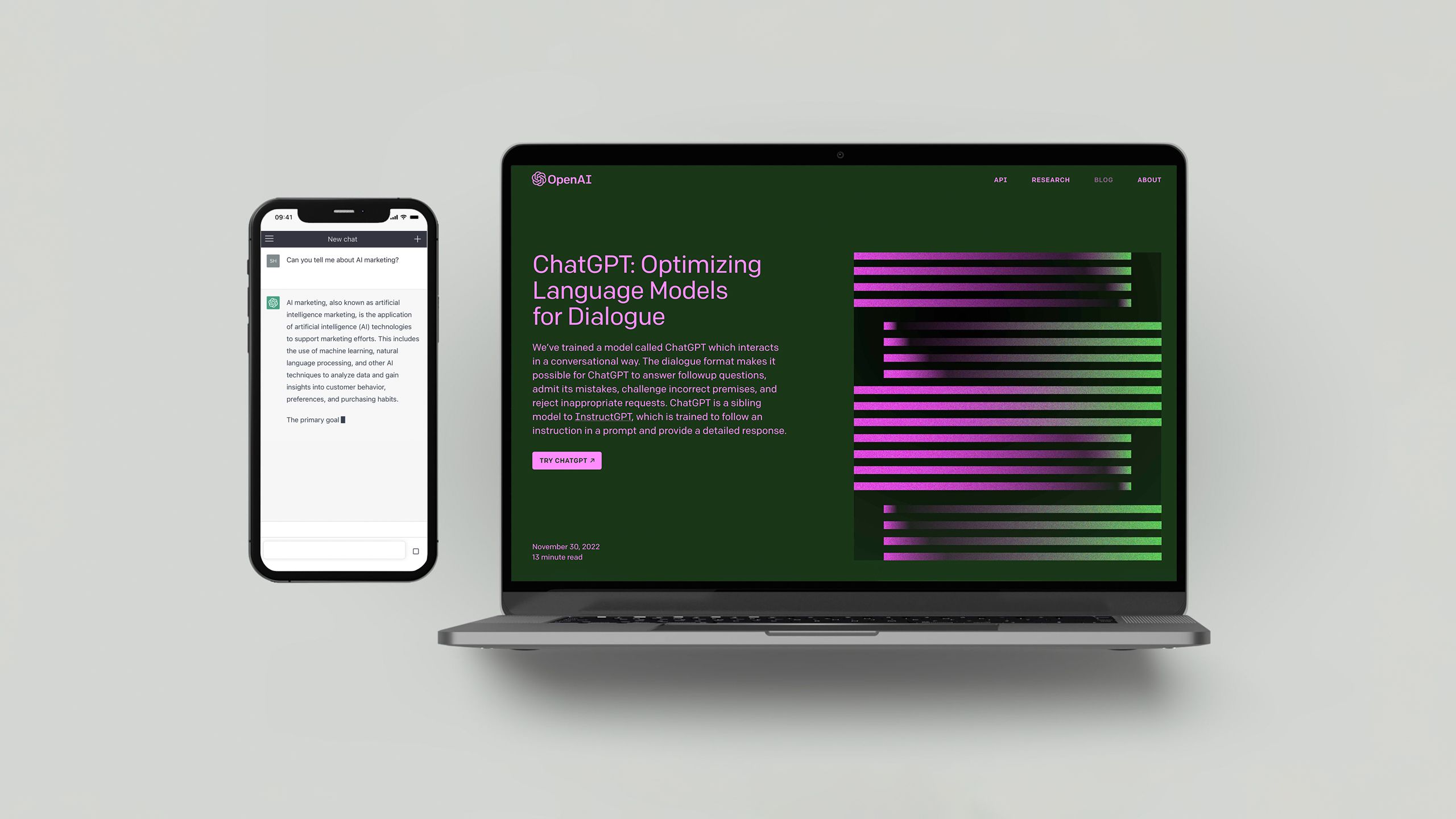

To better understand what generative AI is, and to dig into its capabilities and limitations, I decided to interview ChatGPT. But ChatGPT isn’t the most charismatic talent out there, so here are the highlights of our conversation.

The tool describes generative AI as “artificial intelligence models that can generate new data, such as text and images, based on a set of input data or a set of rules.”

What surprised me, however, was the range of more novel outputs that generative AI is capable of creating.

Speech (i.e., voice creation and cloning), music composition, 3D models and design prototypes, art, handwriting, and even animation are all possible. Take the curious AI replica of Seinfeld called ‘Nothing, Forever’, which streamed 24/7 on Twitch.

The show’s creators recently felt the limitations of AI firsthand when their language model began behaving abnormally and generated skits with transphobic content. Twitch moved quickly to ban the show until further notice.

Unfortunate? Yes. But according to ChatGPT, not entirely unexpected.

The limitations of generative AI tools

During our conversation, ChatGPT explained that its key limitations include a lack of control over what it generates, poor-quality outputs, and the prohibitively high computing power required to train, build, and maintain these tools.

ChatGPT also divulged some of its more complicated limitations: data bias, legal grey areas, and ethical concerns.

Data bias occurs when the training dataset doesn’t accurately represent the real world it’s meant to reflect, so its skews carry through into AI-generated outputs. This could lead, and already has led, to problematic racial and gender biases in AI-generated content.

Further, using copyrighted or trademarked content as training data for AI tools has led to legal issues, since this intellectual property can then be reconfigured into works that very closely resemble the work of dedicated artists, writers, and creatives.

Finally, the ethical concerns highlight an important point: AI tools are very capable of creating harmful or malicious content, without warning and without being asked to.

Ethics, copyright, and AI content

Before digging into the research, I didn’t realise the complexities and real-world consequences associated with AI tools. To help untangle these further, I spoke with Dmytro Kondratiev, International Lawyer and Legal Board Advisor at LLC.Services.

He explains that in terms of AI and copyright, a key difficulty is that AI-generated works aren’t always easily traceable to a specific creator.

“This can lead to difficulties in identifying the rights holder and obtaining licences for use. For organisations using AI to create works, it’s important to have systems in place to properly document and track the creation process,” Kondratiev says.

“It’s important for AI creators to have processes in place to ensure that any commercial use of AI-created works is properly licensed and that any royalties are distributed to the appropriate parties.”

But what could this process look like in reality? According to Kevin Indig, a strategic growth advisor, large content platforms may opt to train their own models to give them a competitive advantage.

If big content platforms train their own AI models on their content, each of them could release a ChatGPT-like interface that competes with OpenAI, Stability AI, Jasper, and others. For example, stock photo sites would compete with DALL-E 2, Stable Diffusion and other generative AI providers; Wikipedia and Quora would compete with ChatGPT and other AI chatbots.

If creator platforms were to join the competition, would this mean greater rights for creators, too? It’s hard to know. In this emerging field of law, Kondratiev urges us to consider the moral rights of creators, in particular the right to the integrity of their work.

“Since AI is incapable of having moral feelings, it may be difficult to reconcile the right to integrity of a work created by AI with the moral rights of the human creator whose work was used to teach the AI.”

Despite these moral grey areas, the possibilities of generative AI have caught the attention of content creators who are keen to find faster, easier ways to get copy onto the page.

How content marketing teams are using AI tools in their production workflow

Broadly speaking, the sentiment held by content professionals towards AI tools is… complicated. And much of this complication can be attributed to where in the production process AI tools are being employed.

Keen to know how and why these tools are being used IRL, I asked content professionals who use AI tools to walk me through their production workflow.

Omniscient Digital

According to Alex Birkett, Co-founder at Omniscient Digital, it’s best to think of generative AI as your helpful (but not always skilful) content assistant.

“These tools are incredible productivity boosters if you use them as an assistant, not a master.”

“For me, they reduce the time I spend stewing on an idea and facing the blank page. I'll use Jasper or ChatGPT to give me some bullet points or ideas, and often the ideas are bad, but I can angle against them. It's like a writing prompt,” Birkett says.

The time savings that AI tools afford are a key motivator for Birkett, who says they can reduce friction at certain points in the production process and ultimately help increase his team’s content output.

“It's a force multiplier when used correctly. The brands who are hoping this will replace their human writers and strategists will lose. The brands who use it to augment their capabilities will win.”

Redfox Visual

For content professionals working in smaller teams, AI tools make it possible to keep pace as project demands scale up. This is certainly the case for Rachel Macfarlane, a content marketing strategist at Redfox Visual.

“AI not only helps us write creative captions, blog posts, and emails, but allows us to save tremendous amounts of time that would otherwise have required additional manpower,” Macfarlane says.

“By leveraging the power of AI, we can generate an infinite number of ideas from any given concept — ensuring creativity and originality in our work. This helps us maintain the quality of our services while meeting increasing customer demands without the need for adding personnel or additional costs.”

Macfarlane says that AI tools have sped up the process of generating ideas for her clients’ monthly social media calendars, which frees up her content team to focus on higher-level strategy.

“We input the subject of an image along with a corresponding keyword, then after clicking the ‘generate’ button, we can select the most suitable version supplied by the tool and make any edits if necessary,” Macfarlane says.

“This allows us to create relatable material both quickly and efficiently, while also avoiding any potential copywriting mistakes. Before, it took almost half the day to complete all of our clients' social media calendars, and now it only takes a couple hours.”

Animalz

In a recent episode of Shorthand’s The Craft podcast, Ryan Law, VP of Content at Animalz, enthused about AI tools and their impact on the content marketing industry.

“A lot of the way I'm using these tools is, I will create an article outline, do keyword research, the ideation, the bigger content structure that this lives within. I then generate the text within my structure, and then I, the skilled human, edit it, as well.”

“So there's the possibility that we will use these tools and our jobs will change, but they will change for the better; we will all become strategists that do slightly higher-leverage stuff. These tools can't interview people. It can't have original experiences. It can't write thought leadership.”

“There's lots of parts of content marketing that aren't fun. I know from my own experience; I've written many articles over the years that were an absolute slog, and there wasn't a huge amount of skill that went into it,” Law explains.

“If I could delegate that to a bit of AI and focus on the stuff that I’m going to enjoy, I think I'm not going to feel too bad about that outcome.”

Conclusion

Being able to create at speed, with seemingly infinite iterations delivered within moments, feels powerful. I remain cautiously optimistic. Cautious about how real-life creators (the writers, artists, designers, coders, photographers, and musicians whose works might be used as training data) will be affected by AI now and in the years to come.

Yet I'm optimistic about how generative AI tools will make creativity more agile and accessible. Solo creators and small teams are already able to scale their content efforts like never before. One can only imagine what's next.

Still, as we take tentative steps into a future with AI, one urgent question remains. We’ve gained the power to automate creativity, but at whose expense?